A Library To Couple PyTorch ML Models With Fortran Climate Models

2025-10-23

Weather and Climate Models

Large, complex, many-part systems.

Parameterisation

Subgrid processes are the largest source of uncertainty

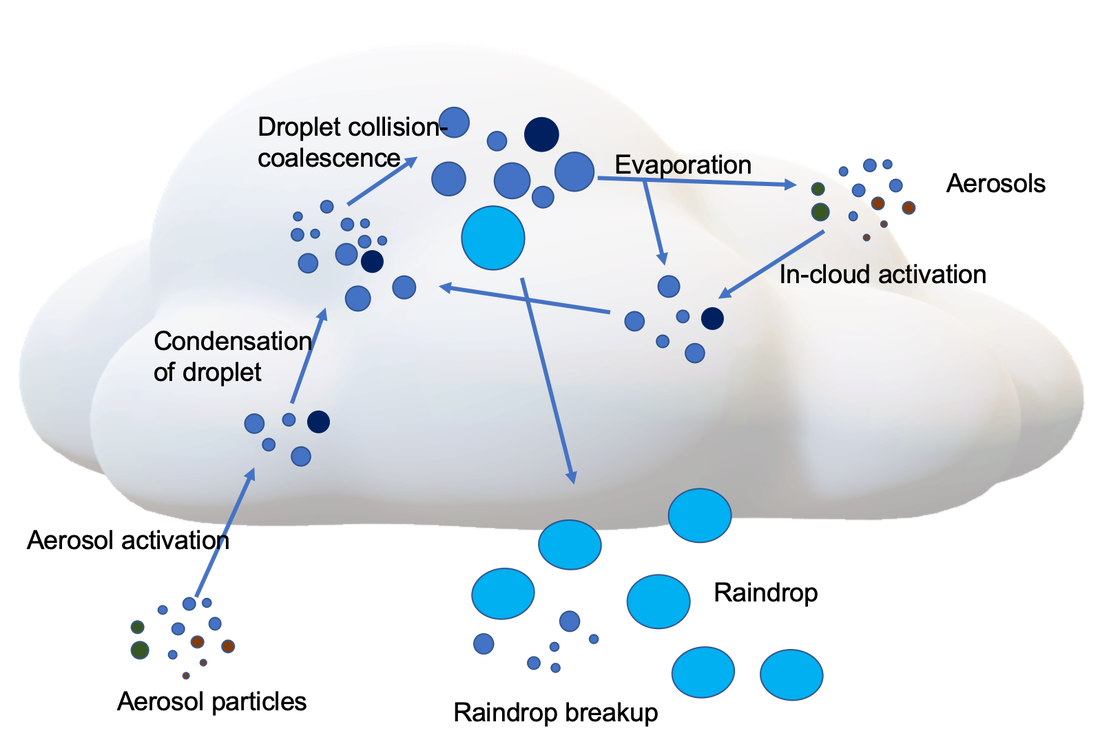

Microphysics by Sisi Chen Public Domain

Staggered grid by NOAA under Public Domain

Globe grid with box by Caltech under Fair use


Hybrid Modelling

Neural Net by 3Blue1Brown under fair dealing.

End-to-End

https://www.microsoft.com/en-us/research/project/aurora-forecasting/

Language interoperation

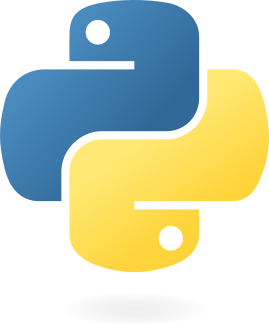

Many large scientific models are written in Fortran (or C, or C++), but machine learning is (mostly) conducted in Python.


Mathematical Bridge by cmglee used under CC BY-SA 3.0

PyTorch, the PyTorch logo and any related marks are trademarks of The Linux Foundation.

FTorch

- PyTorch has a C++ backend and provides an API.

- Binding Fortran to C is straightforward from Fortran 2003 onwards using iso_c_binding.

We will:

- Provide a Fortran API
  - wrapping the libtorch C++ API
  - abstracting complex details from users

- Save the PyTorch models in a portable TorchScript format
  - to be run by libtorch C++

Approach

[Diagram: Python env, Python runtime]

xkcd #1987 by Randall Munroe, used under CC BY-NC 2.5

Highlights - Developer

Easy to build and link using CMake

- or link via Make, like NetCDF

User tools

pt2ts.py aids users in saving PyTorch models to TorchScript

Examples suite

- Takes users through the full process, from trained net to Fortran inference

Full API documentation online at cambridge-iccs.github.io/FTorch

FOSS, licensed under MIT

- Contributions via GitHub welcome

Find it on:

Highlights - Computation

- Use the framework's implementations directly
  - feature and future support, and reproducibility

- Make use of the Torch backends for GPU offload
  - CUDA, HIP, MPS, and XPU enabled
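To illustrate what "using the Torch backends" means on the Python side, the sketch below (plain PyTorch, not the FTorch Fortran API, where the device is chosen with constants such as torch_kCPU) picks whichever accelerator is available and falls back to the CPU. It assumes a reasonably recent PyTorch: `torch.xpu` only exists in newer releases, so the lookup is guarded, and ROCm (HIP) builds of PyTorch report through the `torch.cuda` interface.

```python
import torch

def pick_device() -> torch.device:
    """Return the first available accelerator, else the CPU.

    ROCm (HIP) builds of PyTorch also report through torch.cuda.
    """
    if torch.cuda.is_available():
        return torch.device("cuda")
    if hasattr(torch.backends, "mps") and torch.backends.mps.is_available():
        return torch.device("mps")
    # torch.xpu is only present in newer PyTorch releases, so guard the lookup
    if hasattr(torch, "xpu") and torch.xpu.is_available():
        return torch.device("xpu")
    return torch.device("cpu")

device = pick_device()
model = torch.nn.Linear(5, 5).to(device)  # move the weights to the chosen device
x = torch.ones(5, device=device)          # allocate the input on the same device
y = model(x)                              # inference runs on that backend
print(device.type, tuple(y.shape))
```

The same model code runs unchanged on every backend; only the device placement differs.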


Highlights - Computation

- Indexing issues and the associated reshape are avoided with the Torch strided accessor.

- No-copy access in memory (on CPU).
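A small Python illustration of the idea behind these two points, using a NumPy array as a stand-in for Fortran memory (an assumption for illustration; FTorch itself does this from Fortran via the C++ API): a column-major array can be wrapped as a tensor whose strides describe the column-major layout, so element (i, j) means the same thing on both sides without a reshape or transpose, and the tensor shares the array's memory instead of copying it.

```python
import numpy as np
import torch

# A 2x3 array stored in column-major (Fortran) order
fortran_array = np.asfortranarray(np.arange(6, dtype=np.float32).reshape(2, 3))

# Wrap the same memory as a tensor; no data is copied
tensor = torch.from_numpy(fortran_array)

# Strides (in elements): step 1 along rows, 2 along columns, i.e. column-major
print(tuple(tensor.shape), tensor.stride())  # (2, 3) (1, 2)

# Element (i, j) of the tensor is element (i, j) of the array: no reshape needed
assert tensor[1, 2].item() == fortran_array[1, 2]

# A write through the array is visible in the tensor, confirming shared memory
fortran_array[0, 0] = 42.0
print(tensor[0, 0].item())  # 42.0
```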


Saving model from Python

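import torch
import torchvision

# Load pre-trained model and put in eval mode (required before freezing)
model = torchvision.models.resnet18(weights="IMAGENET1K_V1")
model.eval()

# Create dummy input with the expected shape: (batch, channels, height, width)
dummy_input = torch.ones(1, 3, 224, 224)

# Save to TorchScript: tracing for simple models, scripting more generally
trace = True  # set to False to use scripting instead
if trace:
    ts_model = torch.jit.trace(model, dummy_input)
else:
    ts_model = torch.jit.script(model)

# Freeze to inline the weights and optimise the graph for inference, then save
frozen_model = torch.jit.freeze(ts_model)
frozen_model.save("/path/to/saved_model.pt")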
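Before handing a saved file to Fortran, it can be worth checking in Python that the TorchScript model reproduces the original. The sketch below is an illustrative check rather than part of FTorch, and assumes a small hypothetical stand-in network in place of ResNet-18 (to avoid downloading weights). It traces and freezes the model as above, saves it to a temporary file, reloads it with `torch.jit.load`, and compares outputs on a different batch size.

```python
import os
import tempfile

import torch

# Hypothetical stand-in model; replace with your trained network
model = torch.nn.Sequential(torch.nn.Linear(5, 8), torch.nn.ReLU(), torch.nn.Linear(8, 5))
model.eval()

dummy_input = torch.ones(1, 5)

# Trace and freeze, mirroring the steps above
ts_model = torch.jit.trace(model, dummy_input)
frozen_model = torch.jit.freeze(ts_model)

with tempfile.TemporaryDirectory() as tmpdir:
    path = os.path.join(tmpdir, "saved_model.pt")
    frozen_model.save(path)

    # Reload from disk as a consumer of the file would, then compare outputs
    reloaded = torch.jit.load(path)
    test_input = torch.randn(3, 5)
    with torch.no_grad():
        expected = model(test_input)
        actual = reloaded(test_input)

print(torch.allclose(expected, actual))
```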

TorchScript

- Statically typed subset of Python

- Read by the Torch C++ interface (or any Torch API)

- Produces intermediate representation/graph of NN, including weights and biases

- trace for simple models, script more generally

- Soon to be deprecated
  - ExecuTorch?
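The trace/script distinction matters when a model has data-dependent control flow. In the hypothetical module below, tracing records only the branch taken by the example input, whereas scripting compiles the source and keeps the `if`, so the two disagree on an input that takes the other branch. Tracing also emits a TracerWarning here, which the sketch silences.

```python
import warnings

import torch

class SignDependent(torch.nn.Module):
    """Hypothetical module with data-dependent control flow."""

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        if x.sum() > 0:
            return x * 2
        return -x

module = SignDependent().eval()
positive = torch.ones(3)
negative = -torch.ones(3)

# Tracing bakes in the branch taken for the example input (x * 2)
with warnings.catch_warnings():
    warnings.simplefilter("ignore")  # silence the expected TracerWarning
    traced = torch.jit.trace(module, positive)

# Scripting compiles the Python source, keeping the if statement
scripted = torch.jit.script(module)

print(module(negative))    # tensor([1., 1., 1.])
print(traced(negative))    # tensor([-2., -2., -2.])  wrong branch
print(scripted(negative))  # tensor([1., 1., 1.])
```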

Fortran

use ftorch
implicit none

real, dimension(5), target :: in_data, out_data  ! Fortran data structures
type(torch_tensor), dimension(1) :: input_tensors, output_tensors  ! Set up Torch data structures
type(torch_model) :: torch_net
integer, dimension(1) :: tensor_layout = [1]

in_data = ...  ! Prepare data in Fortran

! Create Torch input/output tensors from the Fortran arrays
call torch_tensor_from_array(input_tensors(1), in_data, torch_kCPU)
call torch_tensor_from_array(output_tensors(1), out_data, torch_kCPU)

call torch_model_load(torch_net, 'path/to/saved/model.pt', torch_kCPU)  ! Load ML model
call torch_model_forward(torch_net, input_tensors, output_tensors)  ! Infer

call further_code(out_data)  ! Use output data in Fortran immediately

! Cleanup
call torch_delete(torch_net)
call torch_delete(input_tensors)
call torch_delete(output_tensors)

Online training and autograd

Work led by Joe Wallwork

To date, FTorch has focussed on enabling researchers to run models developed and trained offline within Fortran codes.

However, it is becoming clear (Mansfield and Sheshadri 2024) that online performance, and options for differentiable/hybrid models (e.g. Kochkov et al. 2024), need more attention.

Pros:

- Avoids saving large volumes of training data.

- Avoids need to convert between Python and Fortran data formats.

- Possibility to expand the loss function's scope to include downstream model code.

Cons:

- Difficult to implement in most frameworks.

Expanded Loss function

Suppose we want to use a loss function involving downstream model code, e.g.,

\[J(\theta)=\int_\Omega(u-u_{ML}(\theta))^2\;\mathrm{d}x,\]

where \(u\) is the solution from the physical model and \(u_{ML}(\theta)\) is the solution from a hybrid model with some ML parameters \(\theta\).

Computing \(\mathrm{d}J/\mathrm{d}\theta\) requires differentiating Fortran code as well as ML code.
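A toy Python version of this idea, assuming the downstream "physics" is itself written in differentiable Torch operations (in FTorch the downstream code is Fortran, which is exactly what makes this hard). A single trainable scalar stands in for \(\theta\) and scales a tendency that a hypothetical explicit time-stepping routine integrates; the integral in \(J\) is approximated by a Riemann sum on a uniform grid. Autograd returns \(\mathrm{d}J/\mathrm{d}\theta\) through both the ML part and the time loop, and for this linear toy problem it can be checked against the analytic derivative.

```python
import math

import torch

# Uniform grid on Omega = [0, 1); the integral becomes a Riemann sum
n_points = 100
dx = 1.0 / n_points
x = torch.linspace(0.0, 1.0 - dx, n_points)
forcing = torch.sin(2 * math.pi * x)

def ml_tendency(u: torch.Tensor, theta: torch.Tensor) -> torch.Tensor:
    """Hypothetical ML component (u is unused in this toy): theta times a fixed shape."""
    return theta * forcing

def downstream_model(u0, theta, n_steps: int, dt: float) -> torch.Tensor:
    """Hypothetical 'physics' code: explicit time stepping driven by the ML tendency."""
    u = u0
    for _ in range(n_steps):
        u = u + dt * ml_tendency(u, theta)
    return u

u0 = torch.zeros(n_points)
n_steps, dt = 10, 0.01
theta_true = 1.5

# "Physical model" solution u (here generated with theta = theta_true)
u = downstream_model(u0, torch.tensor(theta_true), n_steps, dt)

# Hybrid model solution u_ML(theta) with a trainable theta
theta = torch.tensor(1.0, requires_grad=True)
u_ml = downstream_model(u0, theta, n_steps, dt)

# J(theta) = integral over Omega of (u - u_ML)^2 dx, as a Riemann sum
J = ((u - u_ml) ** 2).sum() * dx
J.backward()  # dJ/dtheta flows back through the time loop and the ML component

# Analytic check: J = (n dt)^2 (theta_true - theta)^2 * sum(f^2) dx
expected = -2 * (n_steps * dt) ** 2 * (theta_true - 1.0) * (forcing ** 2).sum() * dx
print(theta.grad.item(), expected.item())
```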

Implementing AD in FTorch

- Expose autograd functionality from Torch
  - e.g., the requires_grad argument and backward methods.

- Overload mathematical operators (=, +, -, *, /, **).

- Optimizers
  - Expose torch::optim::SGD, torch::optim::AdamW, etc., as well as zero_grad and step methods.
  - This already enables some cool AD applications in FTorch.

- Loss functions
  - We haven't exposed any built-in loss functions yet.
  - We have implemented torch_tensor_sum and torch_tensor_mean, though.
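The Torch features listed above fit together as in the following plain-PyTorch sketch. This is the functionality FTorch wraps, not FTorch's Fortran interface: a tensor created with requires_grad, overloaded arithmetic operators to build the expression, a loss assembled from a mean reduction in place of a built-in loss function, backward to obtain gradients, and an SGD optimizer's zero_grad and step methods. The data, target relation, and learning rate are arbitrary choices for illustration.

```python
import torch

# Trainable tensor: requires_grad tells autograd to track operations on it
weights = torch.zeros(4, requires_grad=True)
inputs = torch.tensor([1.0, 2.0, 3.0, 4.0])
targets = torch.tensor([2.0, 4.0, 6.0, 8.0])  # illustrative target relation: 2 * inputs

optimizer = torch.optim.SGD([weights], lr=0.1)

for _ in range(200):
    optimizer.zero_grad()                         # clear gradients from the previous step
    predictions = weights * inputs                # overloaded * operator
    loss = ((predictions - targets) ** 2).mean()  # MSE built from -, ** and mean
    loss.backward()                               # autograd: d(loss)/d(weights)
    optimizer.step()                              # SGD update of weights

print(weights.detach())  # each entry converges towards 2.0
```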

FTorch: Summary

- Use of ML within traditional numerical models

- A growing area that presents challenges

- Language interoperation

- Exploring options for online training and AD
  - Torch autograd and optimizer exposed using iso_c_binding.
  - Work in progress on setting up online ML training.

- Moving away from TorchScript…

Thanks for Listening

Get in touch:

Thanks to Jack Atkinson, Joe Wallwork, Tom Meltzer, Elliott Kasoar, Niccolò Zanotti, and the rest of the FTorch team.

The ICCS received support from

FTorch has been supported by

References